(it was like this for five seconds but then I couldn’t find anything)

reads

Drought!

Tool used: D3.js; data from the Drought Monitor

Growing up in coastal California, it’s easy to forget the truth: it’s weird that we can live here at all. We owe our survival to massive hydraulic works: aqueducts and siphons and weirs bringing us water from Hetch Hetchy or Lake Oroville or the Colorado River.

After three years of debilitating drought, these aren’t enough. The reservoirs are dwindling, crops are being left to die, the NYT is calling us apathetic, and towns are running out of drinking water.


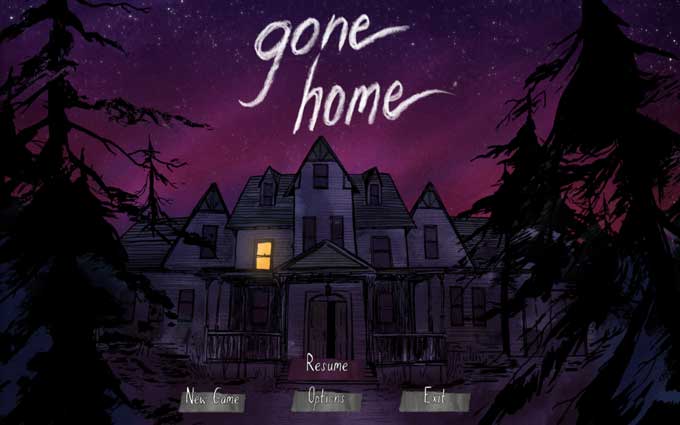

Gone Home

Thing practiced: liking a thing

Not sure how or why, but I found myself in an hour-long argument with a bright and palpably earnest young man about video games, their import, and who may or may not be ruining them for everyone. What prompted this was an out-loud comment I made about last year’s Gone Home, so I figured it was worth writing about the game, plus some other things I’ve enjoyed recently.

Gone Home

The Fullbright Company, Aug 2013

Gone Home: Polygon’s 2013 Game of the Year. Recipient of glowing reviews in the New York Times, Kotaku, the Atlantic, and umpteen other outlets of opinion. VGX’s Best Indie Game and Best PC Game of 2013; winner of the Best Debut awards at both BAFTA and GDC 2014; Games for Change 2014’s Game of the Year. Also: the recipient of user reviews calling it a “2deep4u indie hipster walking simulator” and “an interactive chick story” that “sucked balls like ICO,” and “quite possibly the worst game of all time.” Why do people feel so strongly? Why might you care?

I grew up in suburban California in the ’90s, and like everyone my age I dropped everything to obsess about Pokémon. Thanks to crippling social awkwardness and my brother outgrowing his N64, I kept playing games—first finishing Ocarina of Time and Mario 64, then spending way more time with SNES emulations and gaming IRC rooms and BBSes than a kid probably should.

When I got older, video games seemed like the perfect medium for art. Games marry visual art, music, and written fiction: all things I loved. But beyond that, video games involve the gamer—they force you to play a part in creating the art. This makes games expressive in a way other media can’t be. What video games deliver is experience itself—and if art is about evoking a response in the viewer, what could be more effective?

As a gamer, though, I hit a dead end. I couldn’t make the transition from kid games to “real” games. Nothing resonated the way Zelda or Chrono Trigger had. The popular games all seemed to be about something else.

It’s not a coincidence that big-budget games both revolve around empowerment and are marketed mainly to teenagers and very young adults, the demographic groups most likely to feel frustrated and powerless. These games let people do all the stuff they can’t in real life: drive hot cars, get hot girls, shoot guys in the face, be the hero. Domination and destruction will always be appealing—and some of these games are masterpieces. As representatives of a whole art form, though, they cover a narrow range. We’re handed adrenaline, victory, and good-vs-evil times twenty, but what about empathy? alienation? romance?

That’s why it’s so heartening to play Gone Home.

It’s hard to write concretely without spoiling it, but I’ll try. Gone Home is short: roughly movie-length on a typical playthrough. You’re dropped onto a porch on a dark and stormy night, and you walk around examining things, piecing together a story from the fragments you find. All you have is a window into a 3D world—you can’t see yourself, there’s no health meter, no number of lives. There are no character models, no cutscenes, no puzzles; there’s no combat, no story branching, no fail state. It’s the opposite of high-octane.

But it’s spellbinding. As you probe the intricately-crafted spaces, each element lures you in. The art is sumptuous and hypnotic, and the voice acting is exquisite. It’s all just right—the music, the lighting, each squeak of a floorboard and clack of a light switch—multilayered and cohesive, like when someone’s fingers intertwine perfectly with yours. And Gone Home stays playful throughout: witness interactive food items, endlessly flushable toilets, the inexplicable omnipresence of three-ring binders. If you like, you can heap a pile of toiletries on your parents’ bed, or turn on all the faucets. If you’re scared, you can carry a stuffed animal.

It works. Not because it’s high-concept, but because it’s deeply human. For me, at least, the game unearthed some long-repressed feelings—anxiety, ostracism, the thrill and poignance of a first love; how everything then is either exhilaration or heartbreak.

More than that, though, Gone Home shows that a game can simply tell a story. A story anyone can take part in, one where someone new to games won’t die instantly.

If you’ve ever been intrigued by video games, whether you’re a gamer or not, you should try Gone Home. If you don’t have $20, let me know and you can play it here. If you have $20 but you’re not sure about spending it, try thinking of it as an investment in the Fullbright Company, and in the future of games.

(And if you’re a longtime gamer worried that an influx of new people will dilute gaming culture, qq: Have you ever been shunned and called names by people whose approval and acceptance are important to you? Me too. It sucks. Please don’t be that person.)

Re: Swift

Thing practiced: overenthusing

Depending on your level of nerdiness, you may have heard Apple’s announcement today that a team of mutant programming virtuosos has been working—in secret, for years—on a new language that will replace Objective-C as the lingua franca of the iPhone, iPad, and Mac – plus presumably whatever Apple televisual, home-automating, and/or wearable products are to come. The circle is at last complete.

It’s called Swift—and, if you’re interested, Apple has released a 500-page ebook detailing the language to let developers get started today.

Technically, Swift has some nice characteristics:

It’s fast. Like C, C++, and Objective-C, Swift compiles down to native code—there’s no VM or interpreter slowing things down at run time. Coupled with some serious compiler optimization, this should make Swift performant enough for any size of application.

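It’s safe. Unlike Objective-C, Swift is designed to be memory-safe by default: variables must be initialized before use, array accesses are bounds-checked, and nil has to be handled explicitly through optionals. Ordinary Swift code shouldn’t cause an access violation at run time (unless we explicitly mark our code as unsafe).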

It has first-class functions. Functions can be nested within other functions, passed as arguments to other functions, and stored as values.

It has closures. Along with the code for a function, Swift stores the relevant parts of the environment that was current when the function was created. (This makes higher-order functions like sort and fold possible and powerful.)

It supports both immutable values and variables. Languages usually choose one or the other, but Swift has both: just type let for immutable values and var for (mutable) variables.

Swift is statically typed, but types can be inferred. If the type-checker can tell what type the value/variable should be, we don’t have to write it.

And types can be generic. Functions, methods, and types can take type parameters, so our code can be used across compatible parameter types, without manually writing overloads and without resorting to id and using casts everywhere.

Swift gives us namespaces, with its module system.

Memory is managed automatically, and without pointers. Like Objective-C post-ARC, Swift uses compiler magic to track and deallocate instances as needed. References are strong by default, so we mark one as weak or unowned only where we need to break a reference cycle, and we don’t use * for reference types. This makes iOS programming more accessible (pro/con), though not as easy as with garbage collection. And since the compiler works out the retain and release calls at compile time, there’s no garbage collector pausing things at run time (though the reference counting itself still costs a little).

Swift has tuples (or product types). We can group multiple values into one compound data type, without using container classes/structs.

It has very-special enums (or sum types). A Swift enum value is exactly one of several specified cases. We can use these like plain C integer enums if we like, but Swift enums go further: each case can carry its own associated data.

It has pattern-matching to go with tuples and enums. Values can be matched against patterns to decompose them concisely.

And much more: lazy properties, property observers, class/struct extensions, buffed-up structs, option types, function currying, and type aliasing. And Swift manages to do all this while maintaining smooth interoperability with Objective-C classes.

(For a trivial demo of some of these features, here’s code for Minesweeper in Swift.)

With ideas drawn (in Chris Lattner’s words) from Haskell, Rust, C#, Ruby, Python, and the last twenty years of PL research and practice, Swift looks like a language even programming-languages nerds should find pleasing. It delivers what everyone wants from a language: an elegant way to interface with software.

More important than Swift’s elegance, though, is its context. Programming languages rise and fall not on language quality but on more-practical factors: which libraries and tools are available, what the industry standard is, and what’s most likely to get you a job. Swift is the first place all these factors converge: a highly relevant platform, a sole platform owner with the will to impose a standard, a human-friendly toolset, and a newly-modern language.

Have you ever wanted to start programming on iOS? Today’s the day.

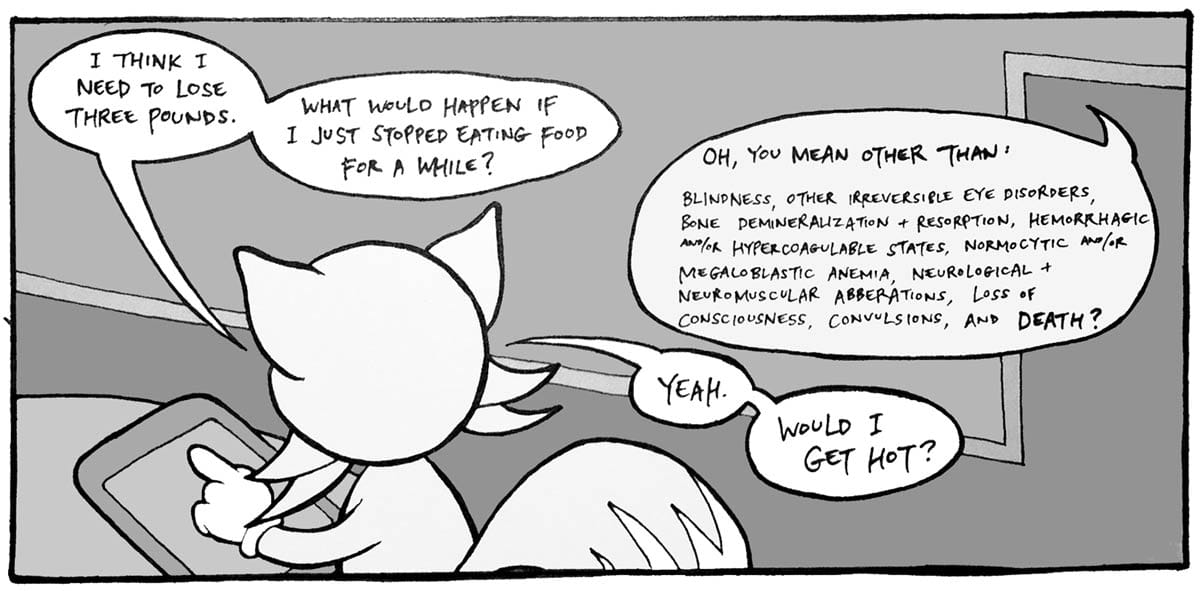

Starvation games: A (fox’s) guide to fasting metabolism

Thing practiced: storytime with canids

Tools used: D3.js, pen + paper, PubMed (references)

For 382 days, a twenty-seven-year-old man chose to consume nothing but fluids, salt, vitamins, and yeast. He survived, dropping from 456 to 180 pounds—and weighed in at 196 five years later.

Fasting is in vogue now, and not just among self-flagellants. Fans of intermittent fasting say hunger hones our bodies’ recovery mechanisms and preserves lean tissue while eliminating fat. Paleo Diet adherents—who argue that obesity, diabetes, and heart disease stem from the mismatch between our evolutionary history and the modern environment—remind us that humans evolved to thrive when meals were rare. So (how) do our bodies survive when we’re not eating?

All our energy derives from breaking carbon-carbon bonds, and we have various carbon backbones available to burn. Glycogen, a branched carbohydrate, is our fast fuel: all our cells maintain a stash, and all can quickly mobilize it as sugar when needed. Stockpiling glycogen is inefficient, though, because we package it with water. (We think of carbohydrates as yielding 4 calories per gram, but we reap only 1-2 calories per gram of hydrated glycogen.)

A larger store of potential energy is in body proteins, which are linear polymers of amino acids. Proteins serve critical functions (skin, ligaments, muscle, enzymes, hormones), so we preserve them as much as possible. Though proteins can and do get broken down for energy, catabolizing more than about half will kill us.

Our main fuel store is fat, which is both efficient to store (because it’s not hydrated) and otherwise useless (meaning it’s easily expendable). Theoretically, a man with 20% body fat could survive off his fat layer for weeks.
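A rough back-of-the-envelope version of that claim, using assumed round numbers that are not from the references (a 70 kg man, about 9 kcal per gram of fat, and a burn rate of 2,000 kcal per day):

$$ 70\ \text{kg} \times 0.20 = 14\ \text{kg of fat} $$ $$ 14{,}000\ \text{g} \times 9\ \text{kcal/g} = 126{,}000\ \text{kcal} $$ $$ \frac{126{,}000\ \text{kcal}}{2{,}000\ \text{kcal/day}} = 63\ \text{days} = 9\ \text{weeks} $$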

In practice, though, calories from different sources aren’t interchangeable. Our bodies don’t literally burn fuel, but instead send it through energy-harvesting pathways that accept specific inputs. Some cell types lack the machinery needed to process certain molecules; for example, red blood cells don’t have mitochondria, so they can use only glucose (sugar) for energy. Other tissues can’t use certain fuels because they’re not physically accessible; for instance, the brain can’t burn fat because fatty acids can’t permeate the blood-brain barrier. And our bodies can interconvert our energy stores in only limited ways: we can convert glucose to fat, and protein to glucose or fat, but we can’t convert fatty acids to either glucose or protein.

When we’re starving, our bodies prioritize two things: maintaining an uninterrupted flow of energy to the brain and spinal cord, and preserving as much body protein as possible. Normally, our brains run solely on glucose. During starvation, though, glucose is precious: we can create it only by destroying body protein. To conserve protein, the brain reduces its glucose usage by burning the ketone bodies β-hydroxybutyrate and acetoacetate as fuel. Likewise, muscle tissue and major organs like the heart usually burn a mixture of glucose and fatty acids, but switch to a ketones-added, lower-glucose, higher-fat mixture.

During the first phase of fasting, as we run down our short-term glycogen stores, the liver ramps up its ketone production to allow us to make these fuel usage transitions.

These metabolic transitions spare vital organ and muscle proteins, but we do still burn some protein—both to produce the minimal glucose some tissues still need and to provide the four-carbon intermediates required to catabolize ketones and fat. Throughout this “steady” state of fasting, which can last weeks to months, our bodies gradually cannibalize our protein stores. Eventually, enough protein is consumed that our organ systems lose function and we die, usually when our respiratory muscles fail. (We die of starvation even if we’ve retained large stores of fat.)

So, what does any of this have to do with a plan to lose weight? Not much, I hope. It’s tempting to think that 20 or 30 days of buckling down and getting hardcore will turn us into perfect, beautiful people. If only.

Scraps

Things practiced: calming down, making food, hanging out

Insomniac cooking

Food: Provençal

Thing practiced: making food

Trying gradually to expand my cooking style from nonexistent to unsophisticated.

Provençal beef stew (Daube de boeuf)

Eggplant custard (Aubergines à la Provençale)

Onion panade (Panade à l’oignon au gratin)

Zucchini fans (Courgettes en éventail)

Garlic chicken (Poulet aux 40 gousses d’ail)

Pork chops with Dijon & apples (Côtes de porc à la moutarde & pommes)

Photos: San Francisco

Photos: Around LA

Thing practiced: photography

Tools used: Canon Rebel T3i, Canon 40mm f/2.8 lens

El Matador SB (Malibu) – f/3.5, 1/1600 s, ISO 100

El Matador SB (Malibu) – f/4, 1/250 s, ISO 100

Angeles Crest Highway (Mount Wilson) – f/4, 1/3200 s, ISO 100

Angeles Crest Highway (Mount Wilson) – f/4, 1/3200 s, ISO 100

Angeles Crest Highway (Mount Wilson) – f/4, 1/2000 s, ISO 100

Getty Center (Brentwood) – f/2.8, 1/40 s, ISO 3200

Getty Center (Brentwood) – f/2.8, 1/1000 s, ISO 100

Getty Center (Brentwood) – f/2.8, 1/1000 s, ISO 100

Lake Shrine (Pacific Palisades) – f/4.5, 1/120 s, ISO 400

Lake Shrine (Pacific Palisades) – f/4.5, 1/160 s, ISO 250

Piano: Beethoven sonata no. 14 in C# minor (Moonlight), mvt. 3: Presto agitato

Thing practiced: piano

Tool used: a piano

I used to play the piano (somewhat haphazardly) as a kid, but I abandoned all music-related activities after damaging my third-favorite finger five years ago. Recently, though, I’ve been scrabbling to grasp whatever wispy skills I can muster, so I try to play whenever I’m near a piano. Which is rarely.

Turns out, I kinda suck. But that’s what practice is for, right?

Cards

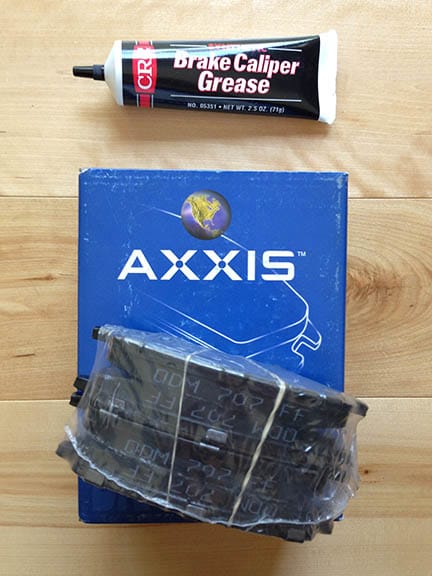

Chores: Mazda MX-5 maintenance

Project Euler: #137, Fibonacci golden nuggets

Thing practiced: math

Tools used: A Friendly Introduction to Number Theory, Python

—no one ever

Consider the infinite polynomial series

$$ {A_F}(x) = x{F_1} + {x^2}{F_2} + {x^3}{F_3} + \ldots \tag{1} $$

where $F_k$ is the $k$th term in the Fibonacci sequence (1, 1, 2, 3, 5, 8, ...).

For every case where $x$ is rational and $A_F(x)$ is a positive integer, we call $A_F(x)$ a golden nugget, because these are increasingly rare – for example, the 10th golden nugget is 74,049,690.

What is the 15th golden nugget?

Solution:

Infinite series are unwieldy, so first we replace (1) with a closed-form version (derived here):

$$ {A_F}(x) = \frac{x}{1 - x - {x^2}} \tag{2} $$
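One way to get (2), sketched here using only the Fibonacci recurrence $F_k = F_{k-1} + F_{k-2}$ with $F_1 = F_2 = 1$ (and assuming $|x|$ is small enough for the series to converge), is to subtract shifted copies of the series from itself so that almost every term cancels:

$$ {A_F}(x) - x{A_F}(x) - {x^2}{A_F}(x) = x{F_1} + {x^2}({F_2} - {F_1}) + \sum_{k \ge 3} {x^k}({F_k} - {F_{k-1}} - {F_{k-2}}) = x $$

Every bracket after the first term is zero, so $(1 - x - x^2)\,A_F(x) = x$, which rearranges to (2).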

We need $A_F(x)$ to be a positive integer, which we’ll call $n$. So, rearranging (2), we get:

$$ {A_F}(x) = n = \frac{x}{1 - x - {x^2}} $$ $$ n{x^2} + (n + 1)x - n = 0 \tag{3} $$

This is a standard quadratic equation with solutions

$$ x = \frac{-(n + 1) \pm \sqrt{(n + 1)^2 + 4n^2}}{2n} \tag{4} $$

As long as the square root portion of (4) is an integer, $x$ will be rational. We can solve for this case by calling this portion $m$ (making the discriminant $m^2$):

$$ m^2 = (n + 1)^2 + 4{n^2} = 5{n^2} + 2n + 1 $$

and, multiplying both sides by 5 and regrouping, we get:

$$ 5m^2 = 25n^2 + 10n + 1 + 4 = (5n + 1)^2 + 4 $$ $$ (5n + 1)^2 - 5m^2 = -4 \tag{5} $$

Now, if we substitute p = 5n + 1, then (5) becomes a Pell-like equation:

$$ p^2 - 5m^2 = -4 \tag{6} $$

This means that we can find solutions to (6) by finding solutions to the corresponding unit-form Pell equation $x^2 - 5y^2 = 1$ and transforming them.

To compute the solutions, we use the technique described here. We first identify the fundamental solutions to (6). Then, we transform these into solutions of the unit-form equation by the identity $(p_i^2 - 5m_i^2)(x_i^2 - 5y_i^2) = -4$. From these, we can generate subsequent solutions, then transform them back into solutions to (6) by the same identity. Finally, we re-substitute our original $n = A_F(x)$ back in to find the golden nuggets.

This gives us the answer: 1,120,149,658,760.
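Here is a minimal Python sketch of the procedure above. It is not the original solution code. It assumes the three smallest positive solutions of (6), namely (1, 1), (4, 2), and (11, 5), as the fundamental solutions, and (9, 4) as the fundamental solution of the unit-form equation. The function names `golden_nuggets` and `rational_x` are made up for illustration.

```python
from fractions import Fraction
from math import isqrt

# Assumed fundamental solutions (p, m) of p^2 - 5*m^2 = -4, one per class.
FUNDAMENTAL = [(1, 1), (4, 2), (11, 5)]
# Assumed fundamental solution (x, y) of the unit-form equation x^2 - 5*y^2 = 1.
UNIT = (9, 4)


def golden_nuggets(count):
    """Return the first `count` golden nuggets in increasing order."""
    x, y = UNIT
    nuggets = []
    current = list(FUNDAMENTAL)
    while len(nuggets) < count:
        next_round = []
        for p, m in current:
            # p = 5n + 1, so n is a positive integer only if p > 1 and p % 5 == 1.
            if p > 1 and p % 5 == 1:
                nuggets.append((p - 1) // 5)
            # Multiply (p + m*sqrt(5)) by (x + y*sqrt(5)); the result still
            # satisfies p^2 - 5*m^2 = -4 because the unit has norm 1.
            next_round.append((p * x + 5 * m * y, p * y + m * x))
        current = next_round
    return sorted(nuggets)[:count]


def rational_x(n):
    """Return the positive rational x from (4) for a golden nugget n."""
    disc = 5 * n * n + 2 * n + 1
    m = isqrt(disc)
    if m * m != disc:
        raise ValueError("n is not a golden nugget")
    return Fraction(m - (n + 1), 2 * n)


if __name__ == "__main__":
    print(golden_nuggets(15)[-1])  # prints 1120149658760
    print(rational_x(2))           # prints 1/2
```

Plugging `rational_x(2)` back into (2) gives (1/2) / (1 - 1/2 - 1/4) = 2, the first golden nugget, which is a quick check that the substitution chain holds together.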

Early morning doodles

Waiting-around doodles

Idle doodles

Thing practiced: drawing

Tools used: colored pencils, paper

I like seeing the doodles people make when talking on the phone at a desk. Some people sketch the things around them (their hand, the Shift key, a plant), some people draw geometric patterns, some people just write down individual words and put boxes around them. When I was a kid we used to spend class passing notes with terrible stick-figure illustrations of pretty much nothing, but it was fun, and idle phone doodling evokes the same feeling. Which is irrelevant, except that I spent tonight’s on-hold time with Comcast doodling:

Institutional politics

Thing practiced: data visualization, sort of

The Supreme Court is considering two big same-sex marriage cases next week: Tuesday’s case (3/26) decides the constitutionality of Proposition 8, which bans gay marriage in California, and Wednesday’s case (3/27) covers the federal Defense of Marriage Act, which defines marriage in the U.S. as exclusively between a man and a woman. ☠️

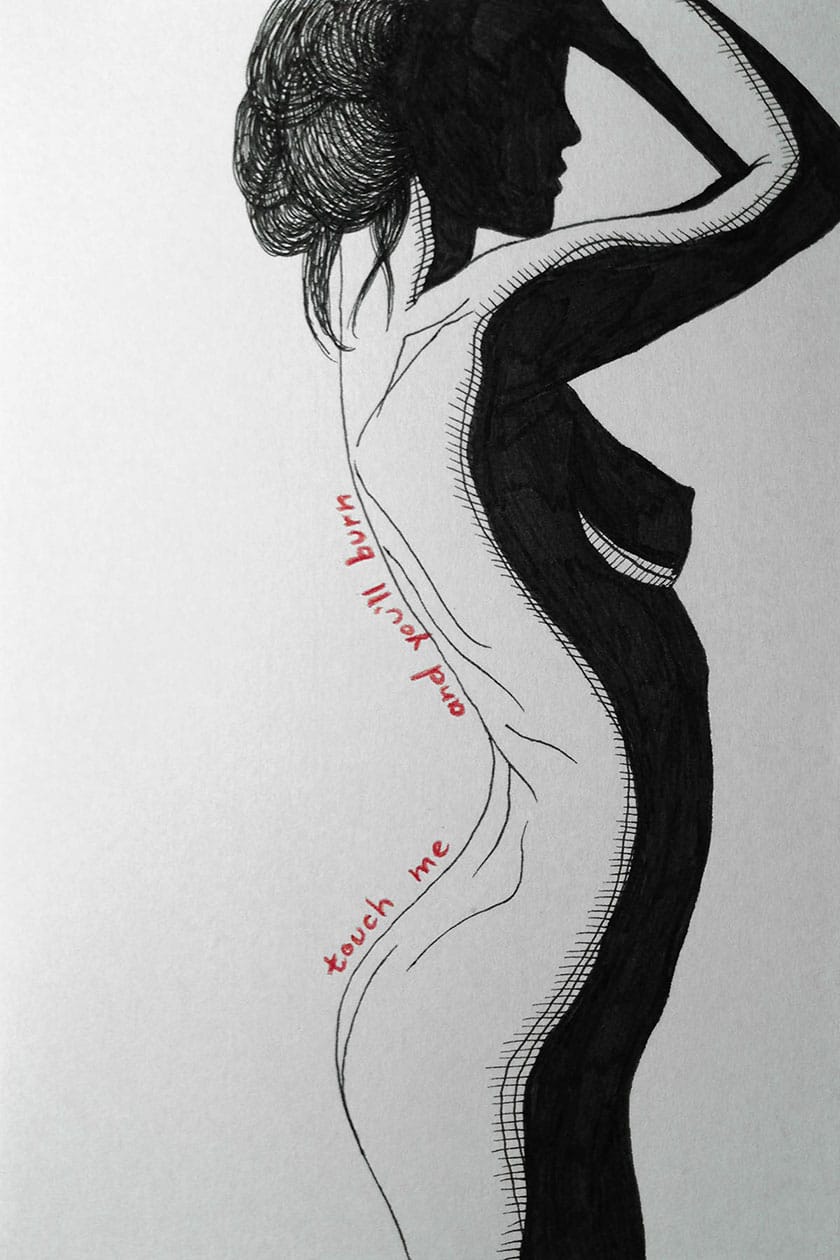

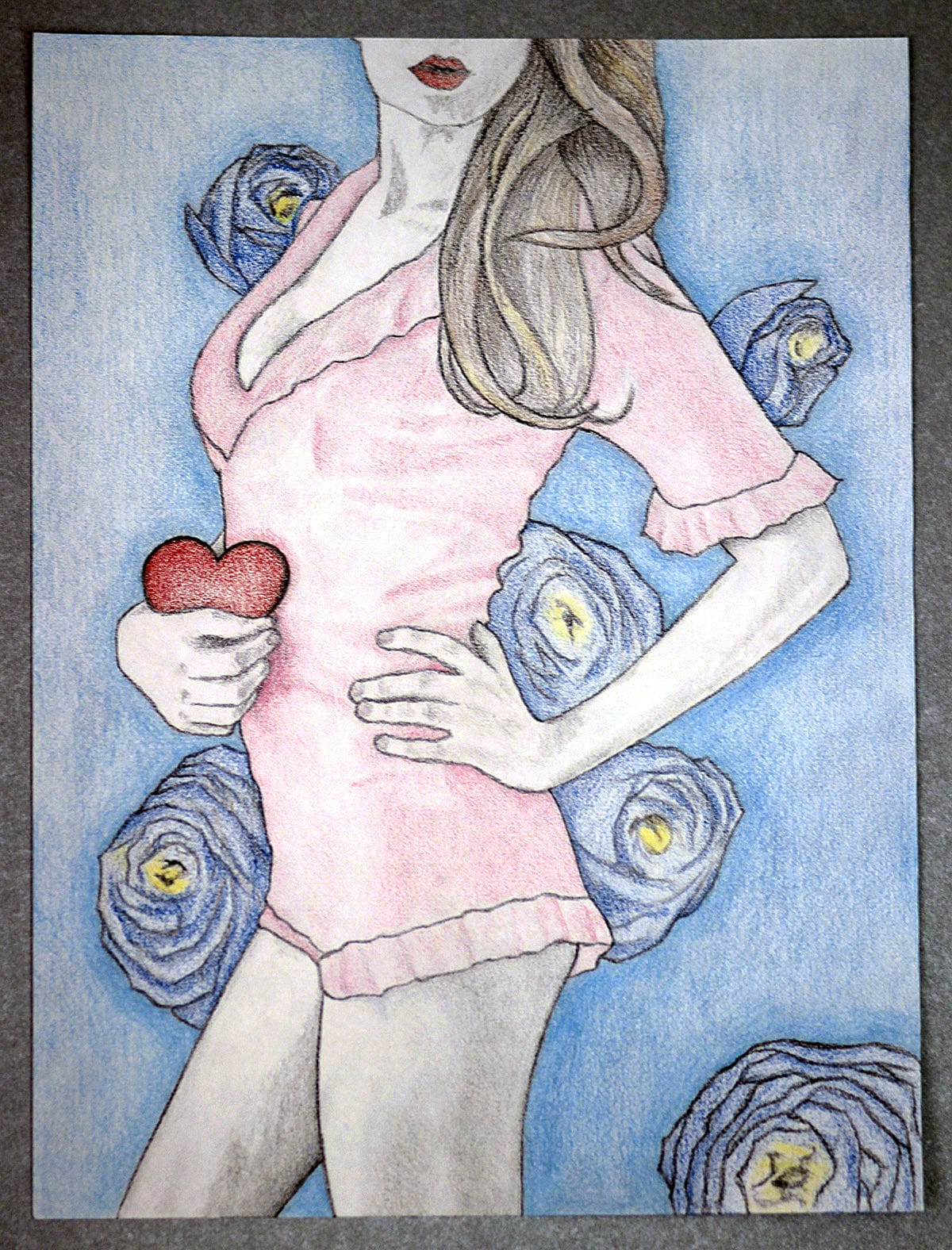

Sketch

Thing practiced: drawing

Tools used: sketch paper, colored pencils, pencil, eraser
